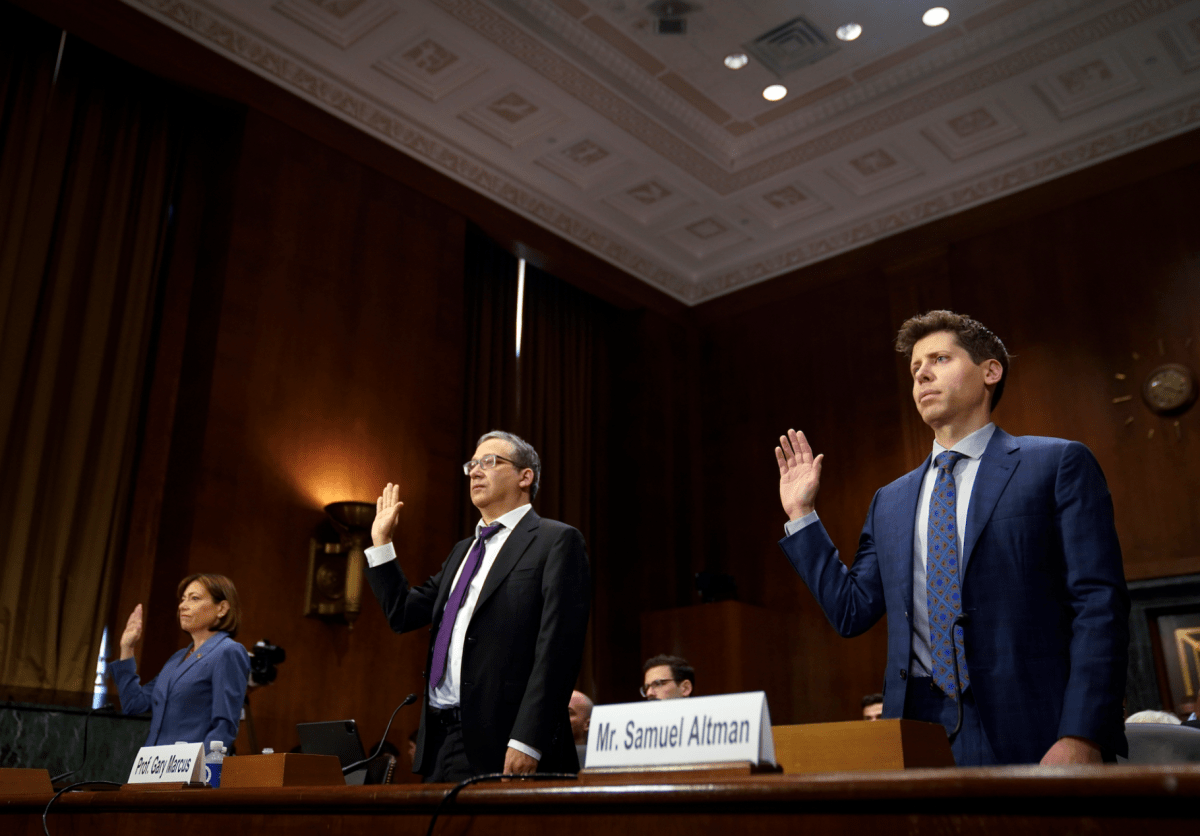

On Tuesday of this week, neuroscientist, founder and author Gary Marcus sat between OpenAI CEO Sam Altman and Christina Montgomery, who is IBM’s chief privacy trust officer, as all three testified before the Senate Judiciary Committee for over three hours. The senators were largely focused on Altman because he runs one of the most powerful companies on the planet at the moment, and because Altman has repeatedly asked them to help regulate his work. (Most CEOs beg Congress to leave their industry alone.)

Though Marcus has been known in academic circles for some time, his star has been on the rise lately thanks to his newsletter (“The Road to A.I. We Can Trust”), a podcast (“Humans vs. Machines”), and his relatable unease around the unchecked rise of AI. In addition to this week’s hearing, for example, he has this month appeared on Bloomberg television and been featured in the New York Times Sunday Magazine and Wired, among other places.

Because this week’s hearing seemed truly historic in ways (Senator Josh Hawley characterized AI as “one of the most technological innovations in human history,” while Senator John Kennedy was so charmed by Altman that he asked Altman to pick his own regulators), we wanted to talk with Marcus, too, to discuss the experience and see what he knows about what happens next.

Are you still in Washington?

I’m still in Washington. I’m meeting with lawmakers and their staff and various other interesting people and trying to see if we can turn the kinds of things that I talked about into reality.

You’ve taught at NYU. You’ve co-founded a couple of AI companies, including one with famed roboticist Rodney Brooks. I interviewed Brooks on stage back in 2017 and he said then that he didn’t think Elon Musk really understood AI and that he thought Musk was wrong that AI was an existential threat.

I think Rod and I share skepticism about whether current AI is anything like artificial general intelligence. There are several issues you have to take apart. One is: are we close to AGI and the other is how dangerous is the current AI we have? I don’t think the current AI we have is an existential threat but that it is dangerous. In many ways, I think it’s a threat to democracy. That’s not a threat to humanity. It’s not going to annihilate everybody. But it’s a pretty serious risk.

Not so long ago, you were debating Yann LeCun, Meta’s chief AI scientist. I’m not sure what that flap was about – the true significance of deep learning neural networks?

So LeCun and I have actually debated many things for many years. We had a public debate that David Chalmers, the philosopher, moderated in 2017. I’ve been trying to get [LeCun] to have another real debate ever since and he won’t do it. He prefers to subtweet me on Twitter and stuff like that, which I don’t think is the most adult way of having conversations, but because he is an important figure, I do respond.

One thing that I think we disagree about [currently] is, LeCun thinks it’s fine to use these [large language models] and that there’s no possible harm here. I think he’s extremely wrong about that. There are potential threats to democracy, ranging from misinformation that is deliberately produced by bad actors, to unintentional misinformation – like the law professor who was accused of sexual harassment even though he didn’t commit it – [to the ability to] subtly shape people’s political views based on training data that the public doesn’t even know anything about. It’s like social media, but even more insidious. You can also use these tools to manipulate other people and probably trick them into anything you want. You can scale them massively. There’s definitely risks here.

You said something interesting about Sam Altman on Tuesday, telling the senators that he didn’t tell them what his worst fear is, which you called “germane,” and redirecting them to him. What he still didn’t say is anything having to do with autonomous weapons, which I talked with him about a few years ago as a top concern. I thought it was interesting that weapons didn’t come up.

We covered a bunch of ground, but there are lots of things we didn’t get to, including enforcement, which is really important, and national security and autonomous weapons and things like that. There will be several more of [these].

Was there any talk of open source versus closed systems?

It hardly came up. It’s obviously a really complicated and interesting question. It’s really not clear what the right answer is. You want people to do independent science. Maybe you want to have some kind of licensing around things that are going to be deployed at very large scale, but they carry particular risks, including security risks. It’s not clear that we want every bad actor to get access to arbitrarily powerful tools. So there are arguments for and there are arguments against, and probably the right answer is going to include allowing a fair degree of open source but also having some limitations on what can be done and how it can be deployed.

Any specific thoughts about Meta’s strategy of letting its language model out into the world for people to tinker with?

I don’t think it’s great that [Meta’s AI technology] LLaMA is out there, to be honest. I think that was a little bit careless. And, you know, that really is one of the genies that is out of the bottle. There was no legal infrastructure in place; they didn’t consult anybody about what they were doing, so far as I know. Maybe they did, but the decision process with that or, say, Bing, is basically just: a company decides we’re going to do this.

But some of the things that companies decide might carry harm, whether in the near future or in the long term. So I think governments and scientists should increasingly have some role in deciding what goes out there [through a kind of] FDA for AI where, if you want to do widespread deployment, first you do a trial. You talk about the cost benefits. You do another trial. And eventually, if we’re confident that the benefits outweigh the risks, [you do the] release at large scale. But right now, any company at any time can decide to deploy something to 100 million customers and have that done without any kind of governmental or scientific supervision. You have to have some system where some impartial authorities can go in.

Where would these impartial authorities come from? Isn’t everyone who knows anything about how these things work already working for a company?

I’m not. [Canadian computer scientist] Yoshua Bengio is not. There are lots of scientists who aren’t working for these companies. It is a real worry, how to get enough of those auditors and how to give them incentive to do it. But there are 100,000 computer scientists with some facet of expertise here. Not all of them are working for Google or Microsoft on contract.

Would you want to play a role in this AI agency?

I’m interested. I feel that whatever we build should be global and neutral, presumably nonprofit, and I think I have a good, neutral voice here that I would like to share and try to get us to a good place.

What did it feel like sitting before the Senate Judiciary Committee? And do you think you’ll be invited back?

I wouldn’t be shocked if I was invited back but I have no idea. I was really profoundly moved by it and I was really profoundly moved to be in that room. It’s a little bit smaller than on television, I suppose. But it felt like everybody was there to try to do the best they could for the U.S. – for humanity. Everybody knew the weight of the moment and by all accounts, the senators brought their best game. We knew that we were there for a reason and we gave it our best shot.